Friday, March 6th, 2026

How We Model Clinical Trial Data When Every Trial Is Different

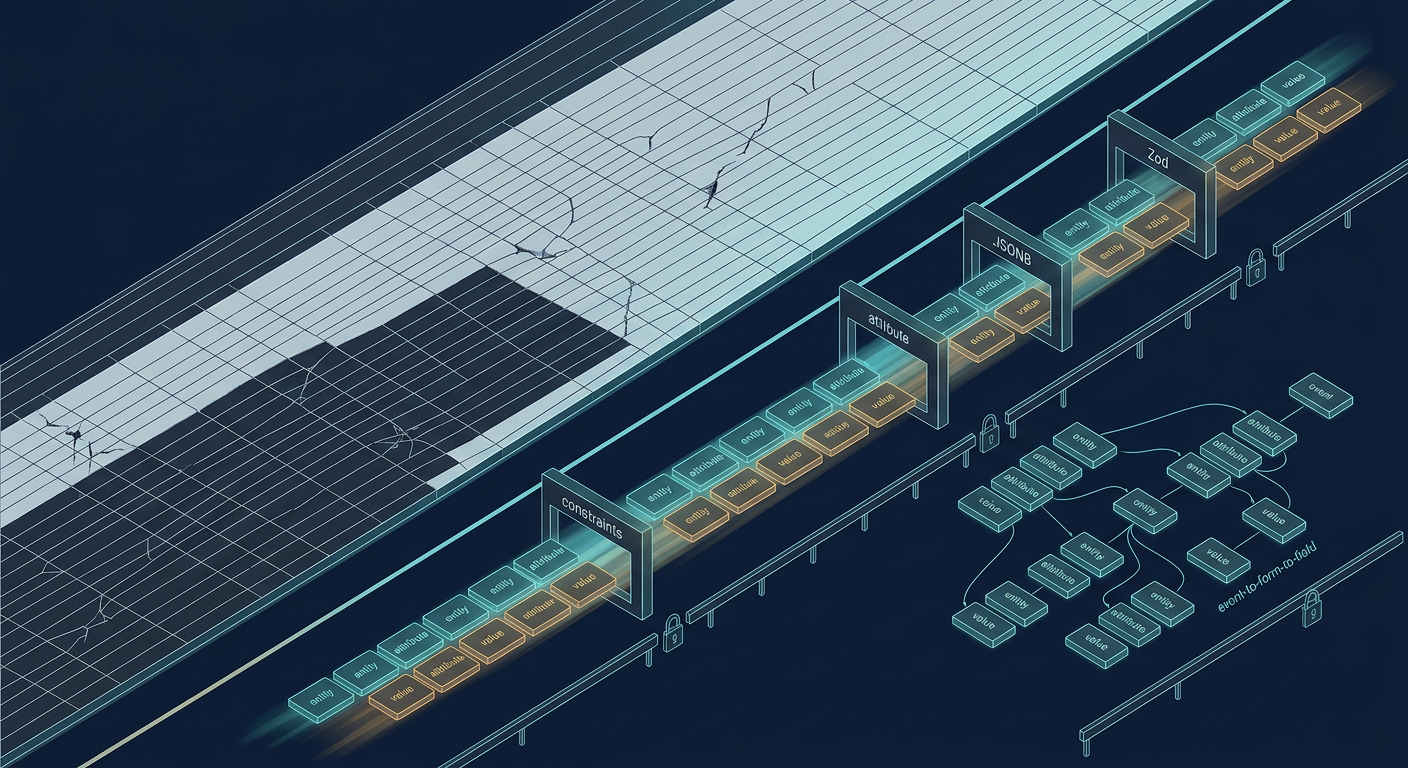

When every clinical trial has a different data model, relational databases struggle with changes while NoSQL trades off safety for flexibility. Here's how Harbor uses a constrained EAV model in Postgres, taking advantage of relational guardrails and Zod-powered app-layer validation to support sparse, user-defined, constantly-evolving clinical data schemas — without compromising safety or integrity.

Every clinical trial has a different data model. A smaller, simpler study might have one or two forms collecting subject demographics and vitals. A larger study might have dozens, with conditional logic that changes the required fields based on previous responses or assigned treatment arm.

Broadly, clinical data is characterized by:

- Sparsity. Not all subjects will have responses to all questions (e.g., due to conditional logic or early study exit).

- Heterogeneity. The data model is different for each study.

- Dynamism. The data model can potentially change mid-study (e.g., a question needs to be added or revised).

- User-definition. The exact data model is ultimately defined by the user, not by the developer.

No matter the size or shape of the data, though, it generally needs to end up in a 21 CFR Part 11-compliant electronic database — like Harbor — for eventual submission to the FDA. But if we don't know the schema in advance, how do we support all the different possible sizes and shapes? Standard web databases are not built for this sort of dynamism. NoSQL alternatives are more flexible, but at the cost of weakened safety and integrity guarantees: a dangerous bargain to make in a regulated domain like clinical trials.

Harbor takes a hybrid approach to combine the best of both worlds: we build on a strong relational foundation to maximize safety and integrity, escape to NoSQL-like flexibility when necessary, and rely on application-layer validation as a secondary line of defense:

- PostgreSQL and EAV. We begin with the proven foundation of PostgreSQL. Relational constraints enforce high-level business rules and invariants. Actual clinical data, however, is stored using a careful application of the NoSQL-esque "entity-attribute-value" (EAV) pattern — more on this below — to ergonomically handle sparse, heterogeneous, dynamic, user-defined clinical data schemas.

- JSONB and Zod. Data values are consistently and compactly stored using PostgreSQL's JSONB column type, but otherwise, the database doesn't enforce any specific type per se. Instead, values are parsed and validated with Zod at the application layer on all read and write paths.

This combination of Postgres, EAV, JSONB, and Zod gives us relational integrity and type safety while also enabling the flexibility required for real-world clinical research.

What is EAV?

If you're not familiar, EAV stands for "entity-attribute-value", and refers to a dynamic, flexible data model that doesn't require fixed types or schemas up front. EAV is composed of:

- Entity. The object for which we are recording data. In the case of clinical trial data, this is usually a specific subject (e.g., "SUBJECT_101").

- Attribute. The "name" of the data we are recording. Alternatively, the "question" that is being asked. For example: the "Weight" field on the "Vitals" form that is meant to be filled out during the "Screening Visit" event (e.g., "SCREENING_VISIT_VITALS_WEIGHT").

- Value. The "answer," i.e., the actual data. For example: 58.3 kg.

In the database, the records might look something like this:

| entity | attribute | value |

|---|---|---|

| SUBJECT_101 | SCREENING_VISIT_VITALS_WEIGHT | "58.3" |

| SUBJECT_101 | SCREENING_VISIT_VITALS_HEIGHT | "1.72" |

| SUBJECT_102 | SCREENING_VISIT_VITALS_WEIGHT | "75.0" |

EAV's flexibility is tailor-made for clinical data:1

- Sparsity. If a subject doesn't have a response to a question, then no row needs to be stored in the database.

- Heterogeneity. Attribute names can be anything; each study can define its own attributes ("questions") and value types ("answers").

- Dynamism: If your study protocol changes and you need to collect a new piece of data going forward, you can simply define a new attribute and it will work.

- User-definition. Since attributes can be arbitrary, there is no issue with having users define their own.

On the other hand, in a traditional relational approach, the database might have to look something like this:

| subject_id | screening_visit_vitals_weight | screening_visit_vitals_height | etc | etc |

|---|---|---|---|---|

| SUBJECT_101 | 58.3 | 1.72 | null | null |

| SUBJECT_102 | 75.0 | null | null | null |

Tables would need to be extremely wide, as there would have to be a separate column for each possible attribute — and most columns would be null, taking up space and adding no value. Every time the study data model changed, columns would need to be added or removed with a full-blown database migration. And each study would have a different set of columns.

EAV allows us to avoid this awkwardness and operational overhead. That said, the naive approach effectively rebuilds NoSQL inside of SQL, and discards many of the safety guarantees of the traditional relational approach:

- Entities and attributes are completely unconstrained; any string can be used as an entity or attribute name.

- Values, similarly, are completely unconstrained and untyped.

Making EAV Safe and Reliable

To mitigate these issues with EAV at Harbor, we first take advantage of the fact that we are building on the foundation of a relational database. In particular:

- Instead of storing entities (i.e., subjects) and attributes as unconstrained strings, we add foreign keys pointing to separate subjects and attributes tables.

- We use relational constraints to enforce that attribute hierarchies are always valid.

To elaborate on the second point above, "attributes" in Harbor aren't just strings; rather, they are leaves in a user-defined hierarchy of events, forms, and fields:

- Events. The data collection occasions in the study schedule, like "Screening Visit" or "Week 4 Visit."

- Forms. The data collection forms that are part of an event, like a "Demographics" or "Vital Signs" form. Contain an

event_idforeign key to the event it is a part of. - Fields. The individual data fields that are part of a form, like "Age" or "Race" fields on a "Demographics" form. Contain a

form_idforeign key to the form it is a part of and atypecolumn that is an enum of the possible field types (e.g., number, text, date, Yes/No, etc.).

Thus, in our system, an "attribute" would be a specific field, contained in a specific form, contained in a specific study event; e.g., the "Age" field in the "Demographics" form for the "Screening Visit" event. This hierarchy is stored relationally, with separate tables for events, forms, and fields.

Internally, we call a specific manifestation of an attribute an "instance," since attributes can be duplicated — for example, if an event is scheduled to occur twice in a study. When setting the attribute value, we create a new "instance" record for each level of the attribute hierarchy:

- Event Instance. The record for a specific instance of an event, like the "Screening Visit" for a specific subject that occurred on a specific date. Contains an

event_idforeign key to the event of which it is an instance. - Form Instance. The record for a specific instance of a form within an event instance, like the "Demographics" form for the "Screening Visit" instance. Contains a

form_idforeign key to the form of which it is an instance and anevent_instance_idforeign key to its parent event instance. - Field Instance. The record for a specific instance of a field, like the "Age" field within a "Demographics" form instance. Contains a

field_idforeign key to the field of which it is an instance and aform_instance_idforeign key to its parent form instance.

Actual attribute "values" are ultimately stored at the field instance level. Concretely, a field instance record might look something like this:

| field_instance_id | field_id | value | form_instance_id | form_id |

|---|---|---|---|---|

| field_inst_456 | field_weight | "58.3" | form_inst_123 | form_vitals |

Note that in addition to the foreign keys mentioned above, the field instance record above also has a denormalized reference to the parent form_id, even though it could theoretically be derived from the form_instance_id by joining the form instance record. We include it because this denormalization enables an even stronger level of referential integrity:

- The

(form_id, field_id)pair on the instance is used as a composite foreign key to thefieldstable. This ensures that theform_idalways refers to the form that truly contains the current field. - The

(form_id, form_instance_id)pair is used as a composite foreign key to theform_instancestable. This ensures that the form instance is actually an instance of the correct parent form.

That is, together, these constraints — along with similar constraints at the event and form levels — ensure that the event instance → form instance → field instance hierarchy always matches the original user-defined event → form → field hierarchy, providing a further guarantee that attribute instances are always in a consistent and correct state.

Type Safety

Adding these relational constraints to the basic EAV schema helps to enforce some structure on what would otherwise be a completely unconstrained data model. However, while the constraints ensure that our attributes have the correct hierarchy, they don't guarantee that the values will be the correct type, since we store all values as JSONB (this allows us to use a single, polymorphic "value" column for all field types). It would be very bad if a "Weight" value were a string or a "Yes/No" answer were a number when we retrieved it from the database.

This is where Zod comes in. Every read and write path to an attribute (i.e., a form field) needs to pass the Zod schema corresponding to the field type before it is returned or saved. For instance, if a field is configured to be of type "number," the value must satisfy Zod's number schema in order to be stored. On the read path, we use Zod schemas to validate and parse the value back into the correct type. At a high level, the write path looks something like this:

const schema = getSchemaForFieldType(fieldType);

const { data: parsedValue } = schema.safeParse(JSON.parse(newValue));

if (parsedValue) {

await updateFieldInstance(fieldInstanceId, { value: parsedValue });

}

Altogether, relational constraints and Zod type enforcement make our EAV model significantly safer than the naive implementation.

Limitations

Nonetheless, this approach has limitations:

- There is only a single level of type safety on the value column, enforced by the Zod schema at the application layer. There is limited database-level type enforcement (mostly, JSON syntax checking).

- In general, EAV is notorious for being an anti-pattern. Among other issues, if not implemented carefully, it can lead to referential integrity issues and makes queries more complex.

To address the first limitation, we could potentially install the pg_jsonschema extension and guarantee attribute type safety at the database level with CHECK constraints. For the time being, though, Google Cloud SQL does not support this extension, so it would only be an option if we moved to self-managed PostgreSQL. That, in turn, would trade off automatic backups, point-in-time recovery, encryption at rest, and other compliance-critical features that we take advantage of as part of the managed Cloud SQL service — so it is not a viable option right now. In the meantime, enforcing the Zod schema on both the write and read paths means that we are instantly alerted (via our error monitoring system) if any value does not satisfy its configured type.

With respect to the second limitation, in addition to the multiple levels of constraints we add to the base EAV schema, note that subject data is the only place where we use the EAV pattern. All of the other data in our application — user authentication, study metadata, etc. — is stored in the traditional relational way, because those use cases are not subject to the same requirements for flexibility and dynamism as clinical trial data. As a result, subject data is the only place where we need to mitigate the risks and pay the additional technical costs associated with EAV (e.g., by writing extra code and creating custom views to make queries against study data more efficient).

In short, within our PostgreSQL foundation, we've built a constrained escape hatch to flexibility and dynamism where it is absolutely necessary, but otherwise we rely on the strong guarantees of the relational model to ensure data integrity and correctness everywhere else.

Looking Ahead

There's a lot more we can share about our approach — including how EAV enables us to ergonomically handle study data model evolution and even study schema changes after first subject in — but we'll save that for another post.

In the meantime, reach out if you're also interested in solving gnarly data modeling problems in a very real, regulated domain. We'd love to hear from you.

Footnotes

-

In fact, clinical data is one of the canonical use cases for the EAV pattern. See also:

Dinu, V., & Nadkarni, P. (2007). Guidelines for the effective use of entity-attribute-value modeling for biomedical databases. International Journal of Medical Informatics, 76(11-12), 769–779. https://doi.org/10.1016/j.ijmedinf.2006.09.023 ↩